A cheaper AI model is good enough for production when the task is repeatable, the judgment standard is documented, and the model has clear escalation rules. If the model has to invent the process, use the stronger model. If the model is applying a process you have already defined, the cheaper model may be enough.

I learned this the expensive way first.

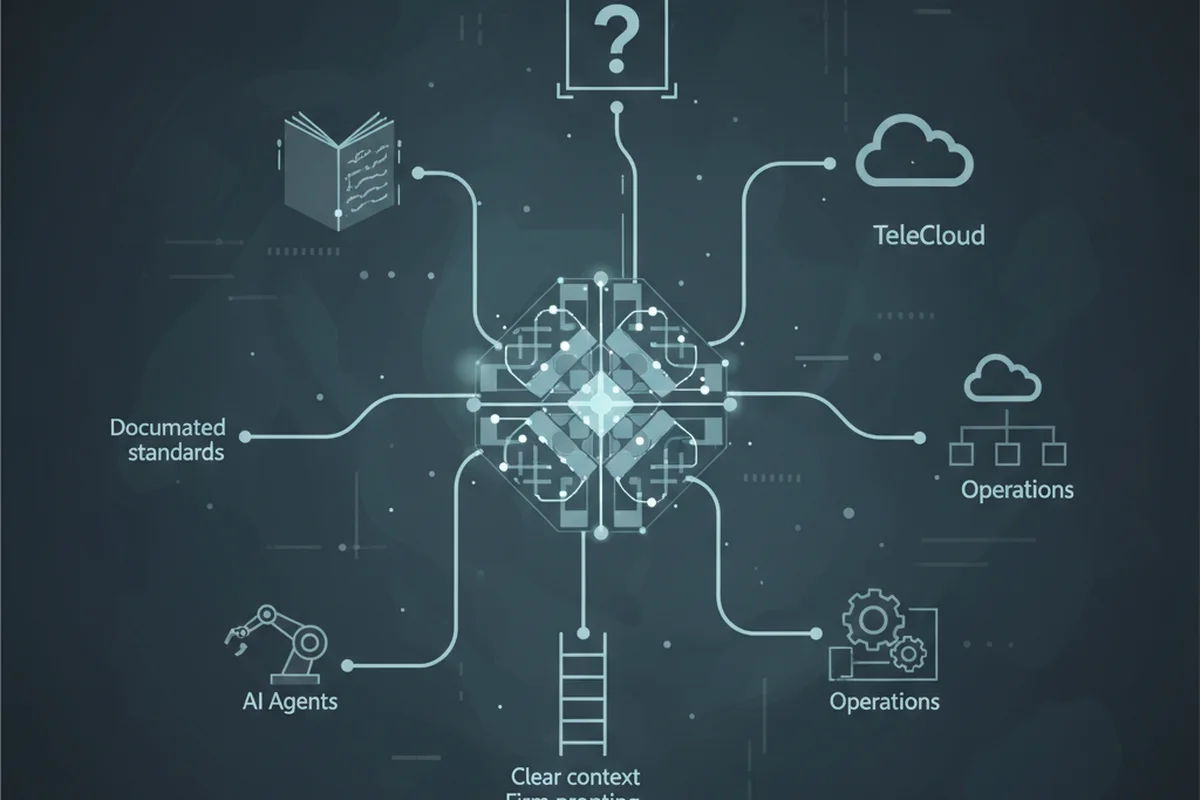

At TeleCloud, we run an agentic operating system that actually touches internal operations. This is not a toy demo where an agent summarizes a blog post and everybody claps. We have agents doing useful work against real service processes, and one of the clearest examples is quality control for support tickets.

We are in the service industry, and we care a lot about white-glove service. That phrase sounds soft until you look at the operational burden behind it. Someone has to check whether the ticket tells the truth. Someone has to make sure the work was actually completed. Someone has to read the notes and decide whether another technician could understand the issue a year from now.

That “someone” was usually a service team lead.

And it was eating a ridiculous amount of time.

What does “good enough” actually mean for an AI model?

“Good enough” does not mean the model feels smart in a chat window.

It means the model can perform a defined job at an acceptable quality level, at an acceptable cost, with a clear path for exceptions. That last part matters. Production work is not about getting one impressive answer. It is about getting the same kind of answer over and over without quietly drifting into nonsense, and about knowing where to send the cases that do not fit.

For our QC agent, the job was not “look at this ticket and vibe-check it.” That would be too vague. The job was much narrower: inspect the ticket against our standard, identify missing or weak information, decide whether it passed QC, and escalate anything the agent should not handle.

That is the first real dividing line.

If the model has to decide what “good” means, you probably need the stronger model. If the model is applying a documented definition of good, the cheaper model has a real shot.
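One way to keep a job that narrow is to force the agent's answer into a fixed shape and reject anything outside it. The sketch below is illustrative only: the JSON field names (`ticket_id`, `verdict`, `findings`, `escalation_reason`) and the three verdict values are assumptions, not the actual TeleCloud schema.

```python
import json
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical verdict values for a narrow QC job: pass, fail, or hand off.
VERDICTS = {"pass", "fail", "escalate"}


@dataclass(frozen=True)
class QCResult:
    ticket_id: str
    verdict: str
    findings: Tuple[str, ...]          # missing or weak information the agent found
    escalation_reason: Optional[str]   # required only when verdict == "escalate"


def parse_qc_result(raw: str) -> QCResult:
    """Parse the agent's JSON reply and reject anything outside the defined job."""
    data = json.loads(raw)
    if "ticket_id" not in data:
        raise ValueError("reply is missing ticket_id")

    verdict = data.get("verdict")
    if verdict not in VERDICTS:
        raise ValueError(f"unknown verdict: {verdict!r}")

    findings = tuple(data.get("findings", []))
    reason = data.get("escalation_reason")

    # A failed ticket with no stated finding is not useful feedback.
    if verdict == "fail" and not findings:
        raise ValueError("a failed ticket must list at least one finding")
    # An escalation without a reason just moves the work without explaining it.
    if verdict == "escalate" and not reason:
        raise ValueError("an escalation must include a reason")

    return QCResult(str(data["ticket_id"]), verdict, findings, reason)
```

A reply that fails this parse is itself a signal worth logging, because it means the model stepped outside the lane it was given.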

How did we make Haiku useful in production?

We did not start by asking Haiku to be brilliant.

We started by documenting the judgment that already existed inside the team. I used Claude Cowork to analyze 5,000 existing service tickets and look for what separated good tickets from bad ones. We already knew the answer in the general sense: clear problem, clear work requested, clear work performed, root cause, and follow-up steps if needed.

But “we know it when we see it” is not enough for an agent.

So we turned that judgment into four context files. The QC rubric explains how the agent should evaluate tickets every time. The escalation rules define what the agent should not try to handle. The known patterns file captures what we saw across those 5,000 historical tickets. The standards file describes what good looks like in our environment.
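As a rough illustration of how four context files like these could be stitched into one system prompt, here is a minimal sketch. The file names, the directory layout, and the section-heading format are all assumptions. The post names the role of each file, not its path or how it is loaded.

```python
from pathlib import Path

# Hypothetical file names, one per role described above.
CONTEXT_FILES = {
    "QC rubric": "qc_rubric.md",
    "Escalation rules": "escalation_rules.md",
    "Known patterns": "known_patterns.md",
    "Standards": "standards.md",
}


def build_system_prompt(context_dir: Path) -> str:
    """Concatenate the four context files into one prompt, in a fixed order."""
    sections = []
    for title, filename in CONTEXT_FILES.items():
        path = context_dir / filename
        # Fail loudly: a QC agent missing its rubric should not run at all.
        if not path.exists():
            raise FileNotFoundError(f"Missing context file: {path}")
        body = path.read_text(encoding="utf-8").strip()
        sections.append(f"## {title}\n\n{body}")
    return "\n\n".join(sections)


# Example usage (assumes a ./context directory containing the four files):
# prompt = build_system_prompt(Path("context"))
```

Keeping the files separate means the rubric can change without touching the escalation rules, and each change can be reviewed on its own.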

That is where the whole thing changed.

At first, I assumed the QC agent would need Sonnet. The work felt too nuanced. It had to read a service ticket, understand whether the work matched the request, spot missing information, and decide whether the issue needed escalation.

Sonnet handled it well.

Then I looked at the cost curve and thought: if this works, it is going to run constantly. Ticket volume adds up. A small cost per ticket becomes a real line item when the agent moves out of “cool experiment” territory and into daily operations.

So I tried Haiku.

I expected the quality to fall off.

It really did not. For this job, with this amount of structure around it, the output was nearly identical.

The cheaper model was not better than expected because it was secretly a genius. It was better than expected because we stopped asking it to guess.

When should you not use the cheaper model?

I would not use the cheaper model for open-ended business judgment.

I do not want Haiku inventing policy. I do not want it deciding what white-glove service means. I do not want it rewriting the operating model for a department because it noticed three examples and got confident.

That is where people get themselves in trouble. They see a cheaper model succeed at a structured task and immediately stretch it into a judgment role.

That is not what I am arguing for.

The pattern that works is narrower: use the cheaper model when the task is repeatable, the success criteria are already written down, the agent has examples, and there is a clear escalation path when the work falls outside the lane.

In other words, do not ask the cheap model to be the manager. Ask it to be the trained reviewer with a checklist, a playbook, and permission to raise its hand.

What role should the smarter model play?

This is where the architecture matters.

We now have three agents running Haiku. But the Chief of Staff agent, the orchestrator watching the system, runs on Sonnet.

That agent can see what the other agents are logging in the observability dashboard. It can notice repeated failures. It can communicate across agents. If the QC agent says the triage agent did not gather enough detail, that observation gets written up to the Chief of Staff.

If that happens three times in seven days, the Chief of Staff reviews the pattern and asks the question I actually care about: does the triage agent need more context in its prompt, or does its context need to be rewritten entirely?
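The "three times in seven days" trigger is a sliding-window count. A minimal sketch follows, assuming observations arrive in chronological order and are keyed by a hypothetical (agent, issue) pair. The class and method names are illustrative, not the actual system.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


class PatternTracker:
    """Flag an (agent, issue) pair once it recurs often enough in a time window."""

    def __init__(self, window: timedelta = timedelta(days=7), threshold: int = 3):
        self.window = window
        self.threshold = threshold
        self._events = defaultdict(deque)  # (agent, issue) -> recent timestamps

    def record(self, agent: str, issue: str, when: datetime) -> bool:
        """Record one observation; return True if a review should be triggered.

        Assumes observations for a given key are recorded in time order.
        """
        events = self._events[(agent, issue)]
        events.append(when)
        # Drop observations that have aged out of the window.
        cutoff = when - self.window
        while events and events[0] < cutoff:
            events.popleft()
        return len(events) >= self.threshold


# Example usage with made-up timestamps:
tracker = PatternTracker()
start = datetime(2025, 1, 6)
tracker.record("triage", "insufficient_detail", start)
tracker.record("triage", "insufficient_detail", start + timedelta(days=2))
needs_review = tracker.record("triage", "insufficient_detail", start + timedelta(days=5))
print(needs_review)  # True: three observations inside seven days
```

The counting itself is cheap and deterministic. The stronger model only gets involved once the threshold trips and someone has to decide what the pattern means.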

That is a better use of the stronger model.

I do not need Sonnet checking every ticket if Haiku can apply the rubric. I want Sonnet watching the system, finding recurring failures, and improving the agents that do the repetitive work.

How much cheaper was it in real life?

I was wrong about the token cost.

Watching Claude Code work can mess with your intuition here. You ask it to change a UI, it compacts two or three times, and you start imagining every production workflow chewing through tokens like that all day.

That was not what happened with QC.

Today we processed 62 tickets through the QC flow for a total cost of $0.74.

I do not know how I would ever get a human QC process down to $0.74 for 62 tickets. And honestly, that is not even the main point. The goal is not to brag that the robot is cheap. The goal is to stop using a team lead’s attention on first-pass review when an agent can handle the structured part and escalate the exceptions.

That is the production value: cheaper review, faster feedback, and fewer low-level checks landing on the people who should be coaching, designing process, and handling exceptions.
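For scale, the arithmetic behind those numbers is short. The per-ticket figure comes straight from the 62 tickets and $0.74 above. The projection is an assumption added for illustration: it supposes the same volume and the same per-ticket cost on each of 22 working days, which a single day's run does not establish.

```python
TICKETS = 62
TOTAL_COST_USD = 0.74


def cost_per_ticket(total_cost_usd: float, tickets: int) -> float:
    """Average model cost per ticket for one run."""
    if tickets <= 0:
        raise ValueError("tickets must be positive")
    return total_cost_usd / tickets


def projected_cost(per_ticket_usd: float, tickets_per_day: int, days: int) -> float:
    """Naive projection: assumes volume and per-ticket cost stay constant."""
    return per_ticket_usd * tickets_per_day * days


per_ticket = cost_per_ticket(TOTAL_COST_USD, TICKETS)
print(f"Per ticket: ${per_ticket:.4f}")  # Per ticket: $0.0119

# Hypothetical month: 22 working days at the same daily volume.
monthly = projected_cost(per_ticket, tickets_per_day=62, days=22)
print(f"Projected month: ${monthly:.2f}")  # Projected month: $16.28
```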

What is the practical rule for choosing a model?

My rule now is simple: start by asking whether the work has enough structure for the cheaper model to succeed.

If the task is vague, politically sensitive, customer-facing in a risky way, or dependent on judgment that has not been documented yet, I reach for the stronger model. If the task is repetitive and the standard is written down, I test the cheaper model earlier than I used to.

That shift matters because most companies are going to get model choice wrong in one of two ways. They will either use the strongest model for everything because it feels safer, or they will use the cheapest model for everything because it looks better in a spreadsheet.

Both are lazy.

The better answer is routing. Let cheaper models do structured work. Let stronger models handle ambiguity, orchestration, and system improvement. Then measure the output instead of arguing about the model name.
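The routing rule can be written down as a small decision function. This is a sketch of the rule as stated in this post, not a general-purpose router: the `Task` fields and the tier names "cheaper" and "stronger" are assumptions, and the result is only a starting point to test and measure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    repeatable: bool
    standard_documented: bool      # success criteria are already written down
    has_escalation_path: bool      # the agent can hand off what it should not handle
    risky_customer_facing: bool = False
    politically_sensitive: bool = False


def choose_model_tier(task: Task) -> str:
    """Return the tier to try first: 'cheaper' or 'stronger'."""
    # Sensitive or risky work goes to the stronger model regardless of structure.
    if task.politically_sensitive or task.risky_customer_facing:
        return "stronger"
    # Structured work with a written standard and an exit ramp can start cheap.
    if task.repeatable and task.standard_documented and task.has_escalation_path:
        return "cheaper"
    # Anything vague or undocumented defaults to the stronger model.
    return "stronger"


# Example: first-pass ticket QC versus an undocumented judgment call.
print(choose_model_tier(Task(True, True, True)))    # cheaper
print(choose_model_tier(Task(False, False, True)))  # stronger
```

The default branch is deliberately conservative: a task has to earn the cheaper tier by having structure, rather than earn the stronger tier by failing first.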

I used to think model choice was mostly about intelligence.

Now I think it is mostly about system design.

The model matters. But the operating system around the model matters more.